About the Journal

Title: International Journal of Interactive Multimedia and Artificial Intelligence (IJIMAI)

Edited by: Elena Verdú and David Camacho

Published by: Universidad Internacional de La Rioja (Spain)

ISSN: 1989-1660 | DOI: 10.9781/ijimai

Periodicity: quarterly

Content access policy: open access

Subjects: AI theories, methodologies, systems, and architectures that integrate multiple technologies, as well as applications combining AI with interactive multimedia techniques

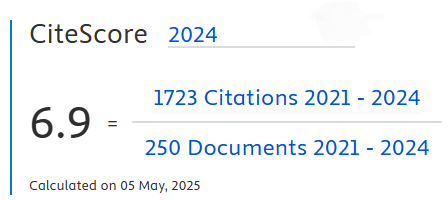

The International Journal of Interactive Multimedia and Artificial Intelligence (IJIMAI, ISSN 1989-1660) is a quarterly open-access journal that serves as an interdisciplinary forum for scientists and professionals to share research results and novel advances in artificial intelligence. The journal publishes contributions on AI theories, methodologies, systems, and architectures that integrate multiple technologies, as well as applications combining AI with interactive multimedia techniques.

Current Issue

This issue brings together contributions that reflect the growing diversity of AI research, covering topics such as information credibility assessment in social media, recommender systems, federated learning and data privacy, medical image analysis, educational technologies, large language models and virtual assistants, intelligent multimedia systems, and environmental monitoring. Collectively, these works illustrate how current AI methods continue to expand across disciplines, addressing both theoretical challenges and real-world problems.